The training data layer for Physical AI.

High-fidelity 3D scenes and physics-grounded assets, delivered at the scale foundation models and robotics simulators actually need.

We build the data that Physical AI trains on.

The world's leading robotics and foundation model teams use Physicl to source simulation-ready environments, physics-tagged assets, and ground truth data at scale.

Request your spot in our Private Beta.

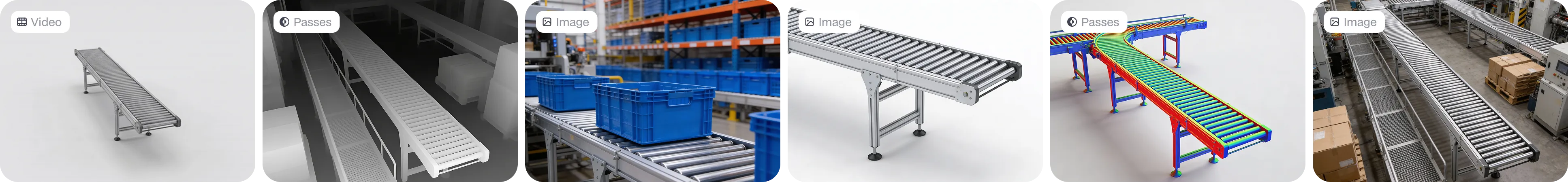

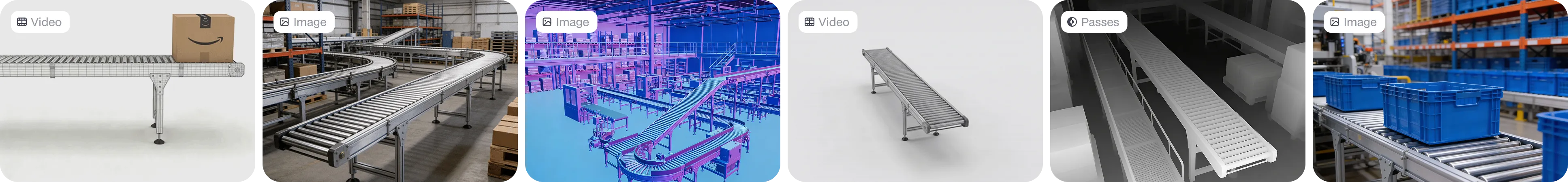

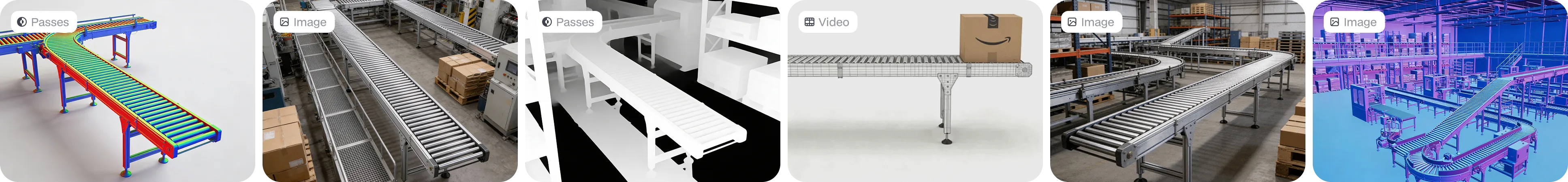

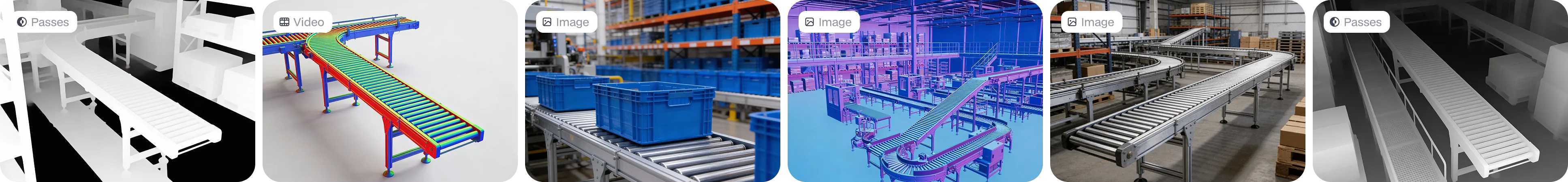

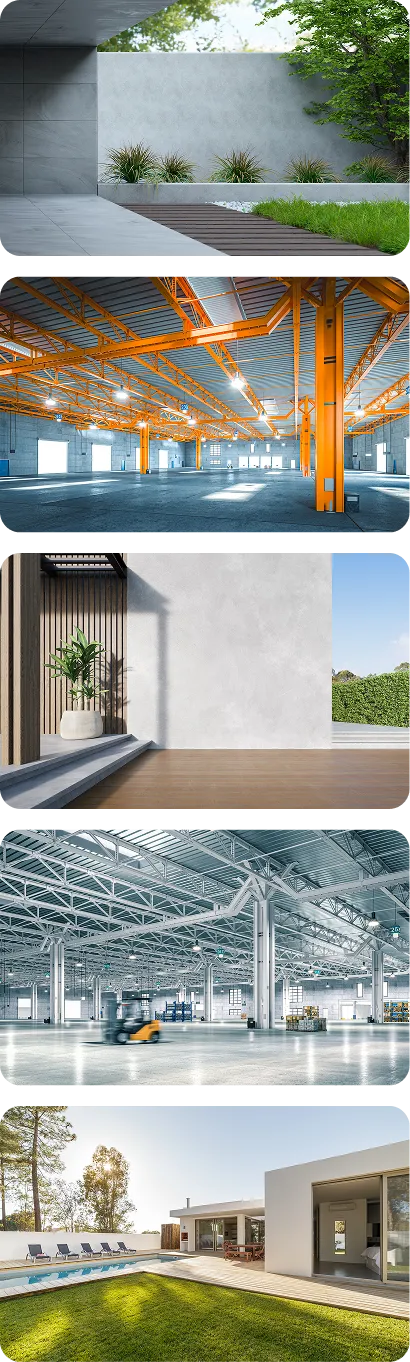

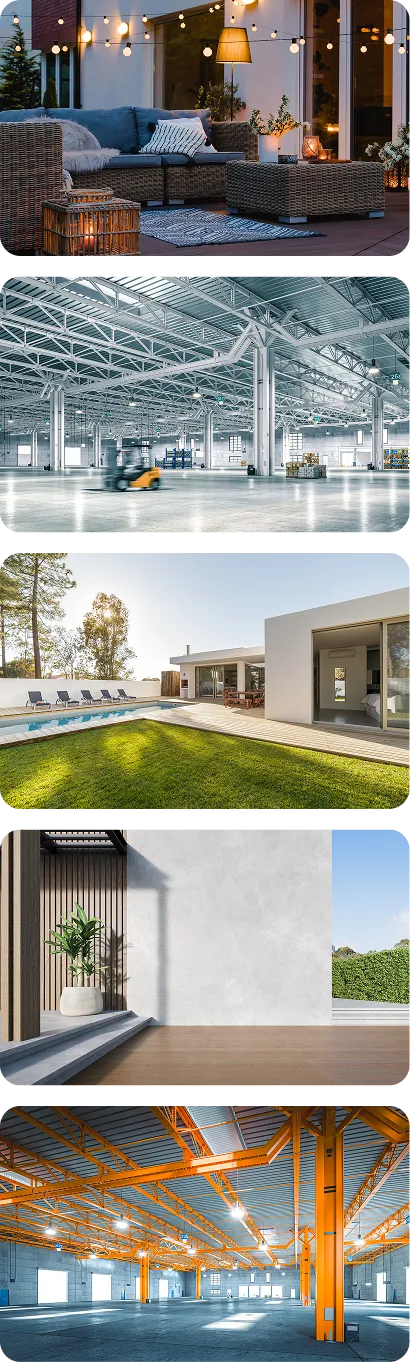

Simulation-ready 3D Assets & Environments

Parametric scene generation

Any input → structured, physics-tagged 3D

Infinite scene permutations

USD, URDF & render outputs

assets at launch

May 1st

July 1st

2027

One platform. Every step of the pipeline.

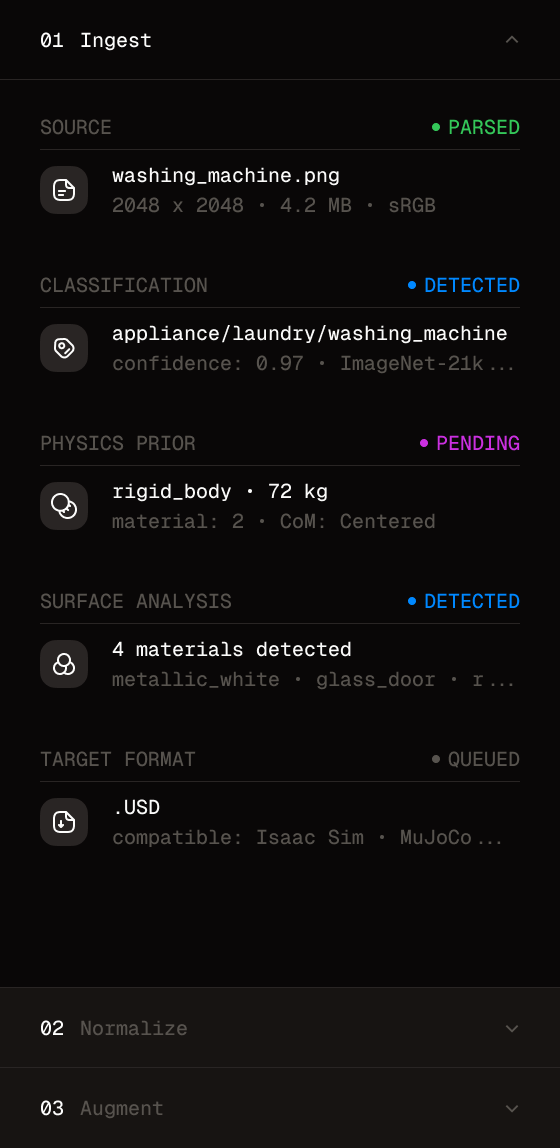

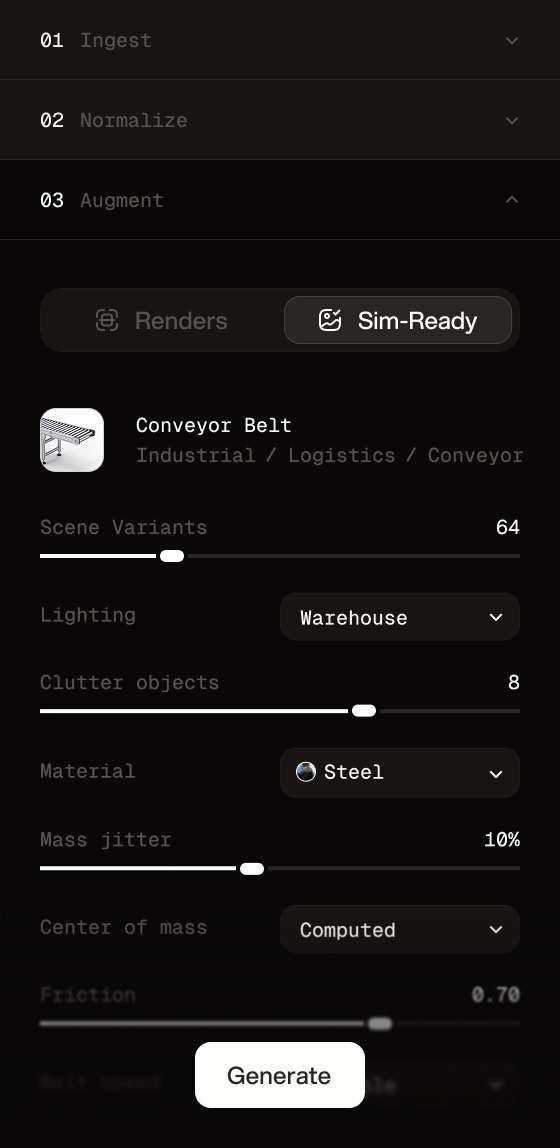

Ingest → normalize → augment → validate → deploy. End-to-end data infrastructure, purpose-built for Physical AI training workflows.

Import any image, video, scan, 3D... as input

- Format: USD • 48K Tris • 3 LOD

- Physics: Rigid • 72 kg • Convex_hull

- Materials: 4 PBR • Usd_Preview_Surface

- Semantics: Graspable: false • Interactive: door

- Sim target: Isaac Sim • MuJoCo • Habitat

- Graph: 142 instances • 38 unique • 5 levels

- Physics: static + dynamic • Per-object collision

- Nav mesh: Walkable 186 m² • Auto-generated

- Semantics: 12 labels • COCO-compatible

- Sim target: Isaac Sim • Omniverse • Habitat
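To make the asset card above concrete, here is a minimal sketch of how such metadata could be held as a typed record and checked before loading into a simulator. The `AssetRecord` class, its field names, the `convex_hull` label, and the `readiness_issues` checks are all hypothetical illustrations, not Physicl's actual schema or validation rules.

```python
from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """Hypothetical metadata record mirroring the asset card fields."""
    fmt: str                      # file format, e.g. "USD"
    triangle_count: int
    lod_count: int
    body_type: str                # e.g. "rigid"
    mass_kg: float
    collision: str                # collision approximation label (assumed naming)
    sim_targets: list[str] = field(default_factory=list)


def readiness_issues(asset: AssetRecord) -> list[str]:
    """Return human-readable problems; an empty list means none were found."""
    issues = []
    if asset.mass_kg <= 0:
        issues.append("mass must be positive")
    if not asset.collision:
        issues.append("missing collision approximation")
    if asset.lod_count < 1:
        issues.append("at least one LOD is required")
    if not asset.sim_targets:
        issues.append("no simulator target listed")
    return issues


# Values taken from the example card; the checks themselves are illustrative.
door = AssetRecord("USD", 48_000, 3, "rigid", 72.0, "convex_hull",
                   ["Isaac Sim", "MuJoCo", "Habitat"])
print(readiness_issues(door))
```

A record that passes returns an empty list, so the same function can gate a batch import by filtering on `not readiness_issues(asset)`.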

Physical AI has a data problem.

Robotics models and world models need massive amounts of physically accurate 3D training data. Existing sources are fragmented, unlicensed, or not sim-ready. We fix that.


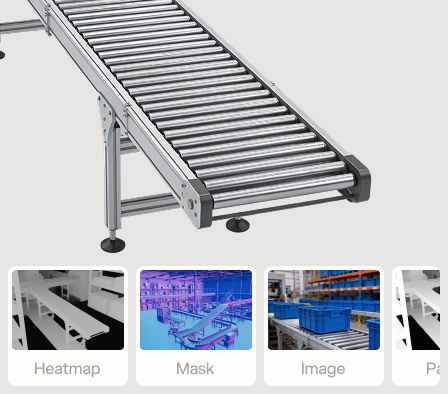

98% sim-ready, out of the box.

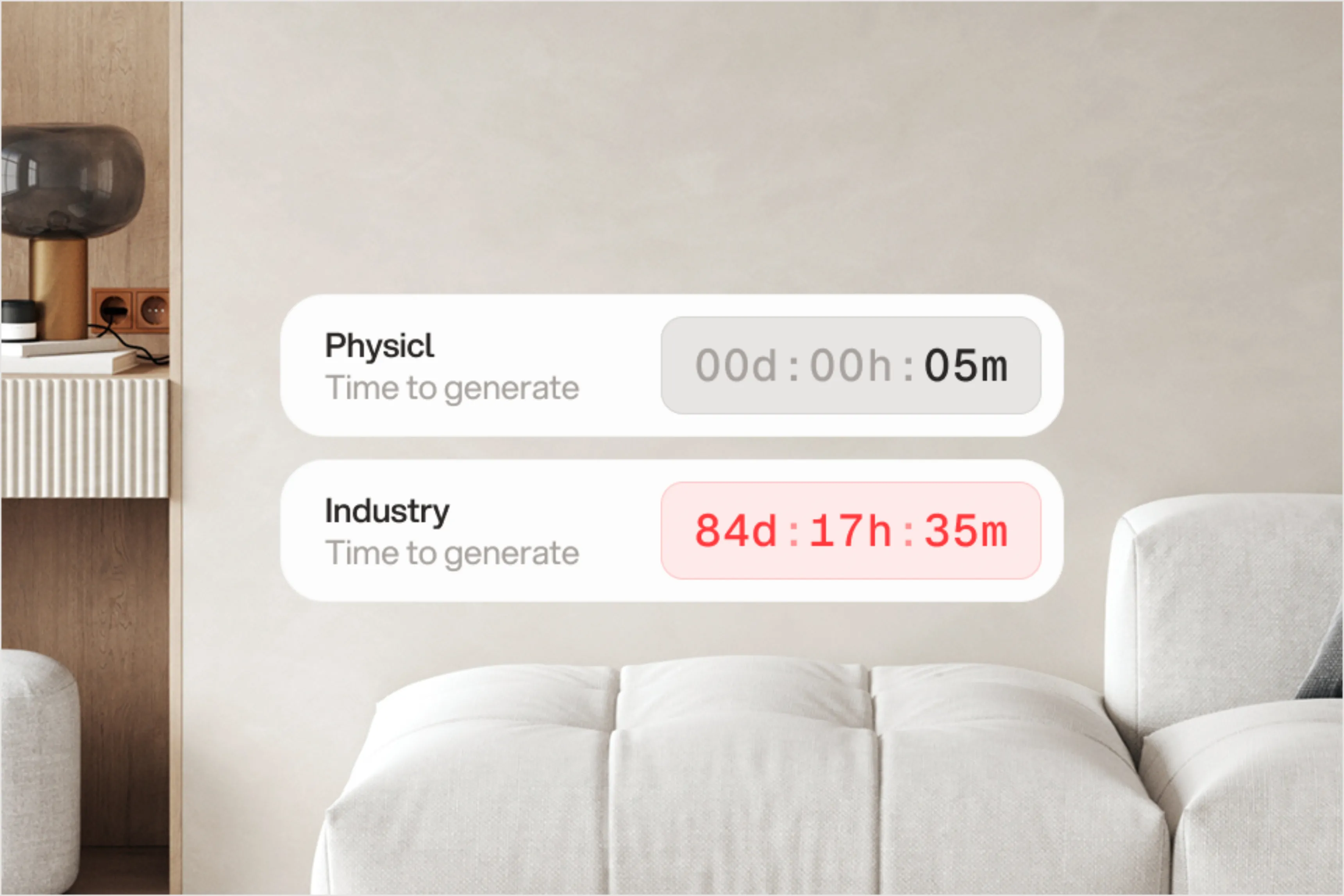

Industry-standard assets require months of cleanup before they can run in a simulator. Physicl assets arrive physics-tagged, collision-meshed, and validated — ready to load.

Stop waiting on data. Start iterating.

Traditional 3D production pipelines take months. Physicl compresses that into minutes — so your training loop doesn't wait on your data pipeline.

Manual physics tagging takes weeks. Ours is automatic.

Physicl deduces friction, mass, and collision data directly from geometry and material properties — no manual annotation, no approximation.

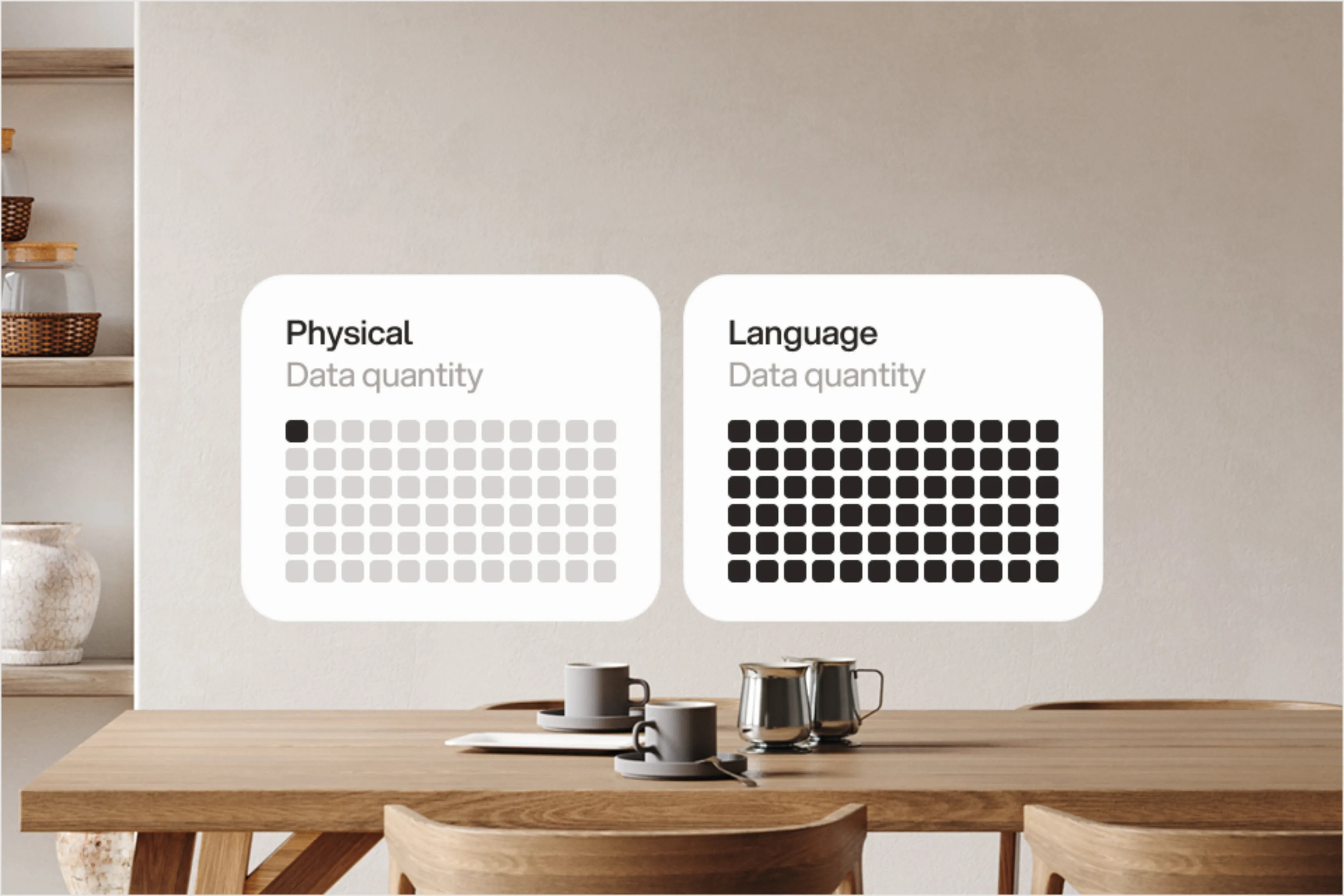

The data Physical AI is missing, at the scale it needs.

Robotics models and world models require orders of magnitude more diverse 3D environments than currently exist. We generate them on demand.

Built for the teams pushing Physical AI forward.

Same infrastructure. Purpose-built data surfaces for robotics simulation and foundation model training.

Robotics teams.

Physics-tagged environments, manipulation scenarios, and edge-case diversity — for training navigation, grasping, and long-horizon tasks in simulation.

Foundation model teams.

Massive-scale 3D scene libraries, clean licensing, and ground truth alignment — for training world models and spatially grounded generative AI.

Physics is derived, not estimated.

We build a parametric model for every category. The 3D asset is a derivative of that model — so geometry and materials are fully known, and physics properties are mathematically deduced, not approximated.
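As a rough illustration of deriving physics from known inputs, the sketch below computes mass and principal moments of inertia for a single solid box-shaped part from its parametric dimensions and a material density. The `BoxPart` class, the density table, and the door dimensions are assumptions made for this example; a real parametric model would cover far more complex geometry.

```python
from dataclasses import dataclass

# Assumed densities in kg/m^3 (typical handbook figures, for illustration only).
DENSITY_KG_M3 = {"oak": 750.0, "steel": 7850.0}


@dataclass(frozen=True)
class BoxPart:
    """A solid rectangular part defined by parametric dimensions in metres."""
    width: float
    height: float
    depth: float
    material: str

    def volume(self) -> float:
        return self.width * self.height * self.depth

    def mass(self) -> float:
        # Mass follows directly from known volume and material density.
        return DENSITY_KG_M3[self.material] * self.volume()

    def inertia_diagonal(self) -> tuple[float, float, float]:
        """Principal moments of inertia of a solid cuboid about its centre of mass."""
        m = self.mass()
        w, h, d = self.width, self.height, self.depth
        return (
            m * (h**2 + d**2) / 12.0,  # about the width (x) axis
            m * (w**2 + d**2) / 12.0,  # about the height (y) axis
            m * (w**2 + h**2) / 12.0,  # about the depth (z) axis
        )


# Hypothetical solid oak door slab: 0.9 m x 2.0 m x 0.04 m.
door = BoxPart(width=0.9, height=2.0, depth=0.04, material="oak")
print(f"mass: {door.mass():.1f} kg")
print("inertia (kg*m^2):", [round(i, 4) for i in door.inertia_diagonal()])
```

Because every quantity is a closed-form function of the dimensions and the density, changing a parameter (for example, a thicker slab) updates the physics values with no separate annotation step.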

Data Model

10k+ Category ontology

Parametric Model

Structure + Constraints

3D Asset

Parametric parts + Materials

Physics Properties

Exact from known inputs

10,000+ expert validators.

Human quality at AI scale.

Every asset passes through a global network of 3D specialists, physics reviewers, and simulation engineers — with a 98% QC pass rate and three levels of RLHF validation.

RLHF-as-a-Service

Expert Validation

Physics QC

98% Pass Rate

Expert Validators

Assets / month

QC Pass Rate

RLHF Levels

Official Partnership • Since Jan. 2025

Get the training data your models need.

Meta, Adobe, and World Labs use Physicl to source simulation-ready environments and physics-tagged assets at scale. Request access or talk to the team.